Harnessing the latest AI technology to prevent road collisions and understand fleet risk

Using increasingly sophisticated AI technologies, we are automating management processes, data analysis and incident detection in a wide range of fleet, insurance, road safety and risk management settings. This is enabling vehicle operators to take advantage of smart camera solutions and use video telematics like never before.

At the forefront of AI video telematics

Our UK-based, in-house development team is creating intelligent edge- and cloud-based solutions using the latest advances in artificial intelligence, machine learning and computer vision. These AI-enabled video telematics innovations are underpinned by the functionality, scalability and capacity of Autonomise.ai.

Combining forward- and driver-facing AI technology

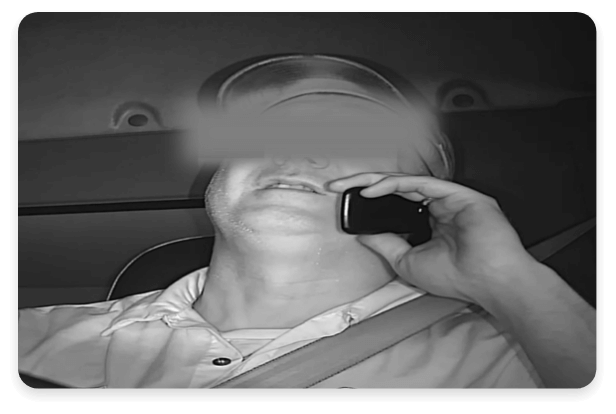

We are combining forward- and driver-facing AI camera technology to detect dangerous or distracted behaviour and help drivers self-correct it. By identifying and assessing risk inside and outside the vehicle, the system can notify the driver of any issues and upload footage to Autonomise.ai for review.

Distractions such as mobile phone use, eyes off the road and smoking can be detected alongside other fleet risks, such as fatigue, tailgating and nearby vulnerable road users.

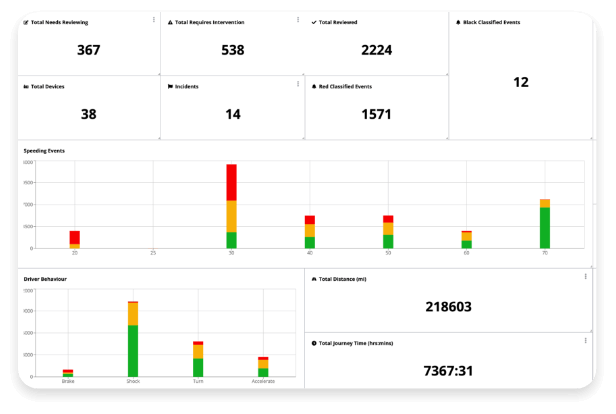

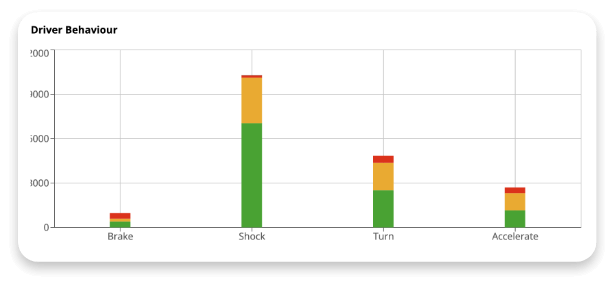

Providing unparalleled insights into driver behaviour, fleet risk and road events

Autonomise.ai’s cloud-based computer vision models provide unparalleled insights into driver behaviour, fleet risk and road events. No fleet operation has the time and resources to manually review every triggered collision, near miss or driving event. Automated post-analysis of uploaded footage cuts through the noise without the need for human intervention, removing false positives and determining whether any urgent action is required.

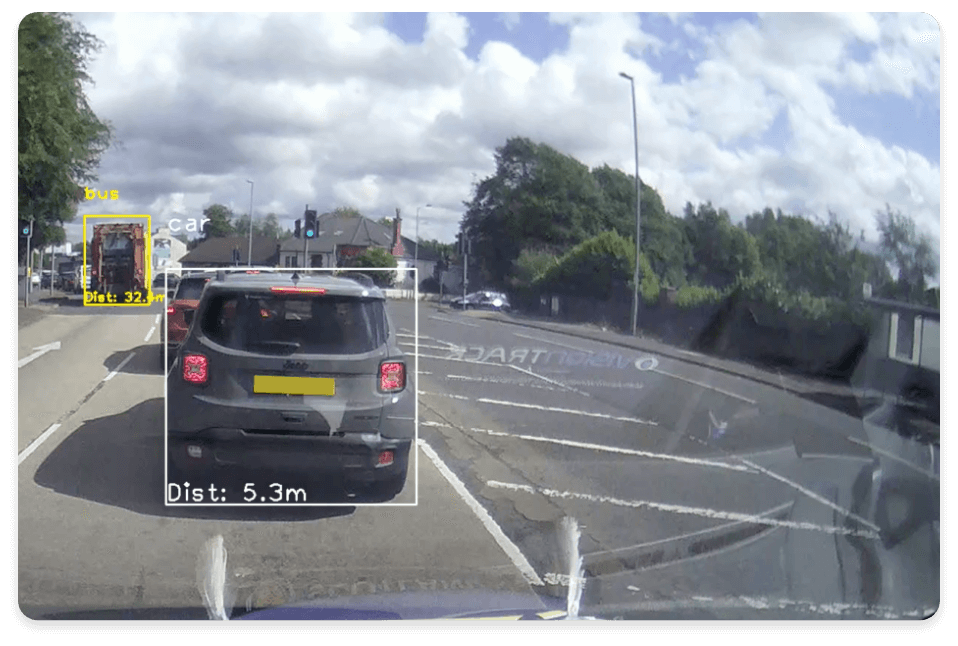

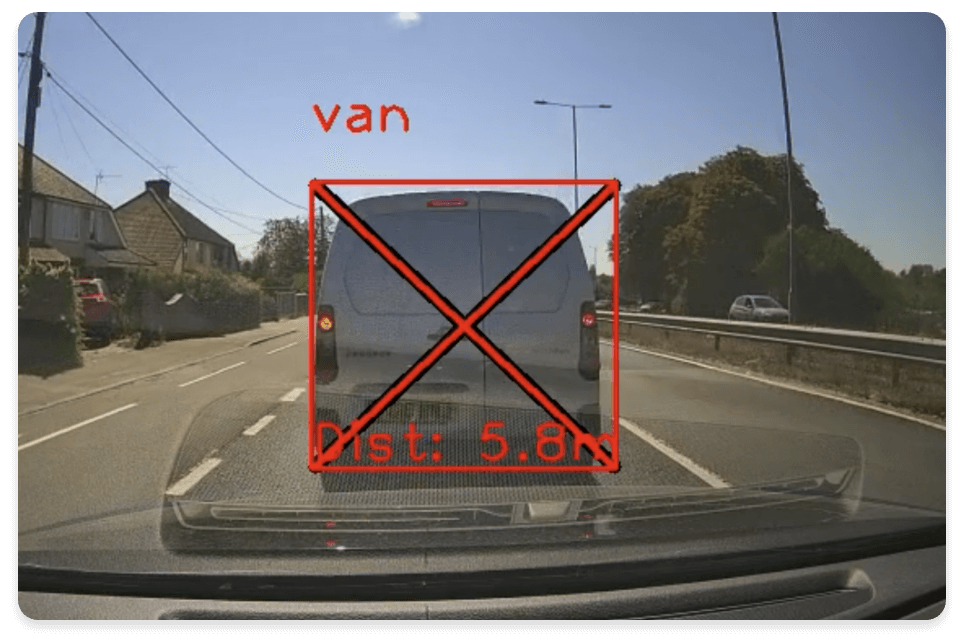

Automatically identifies vehicles, people, tailgating and crashes

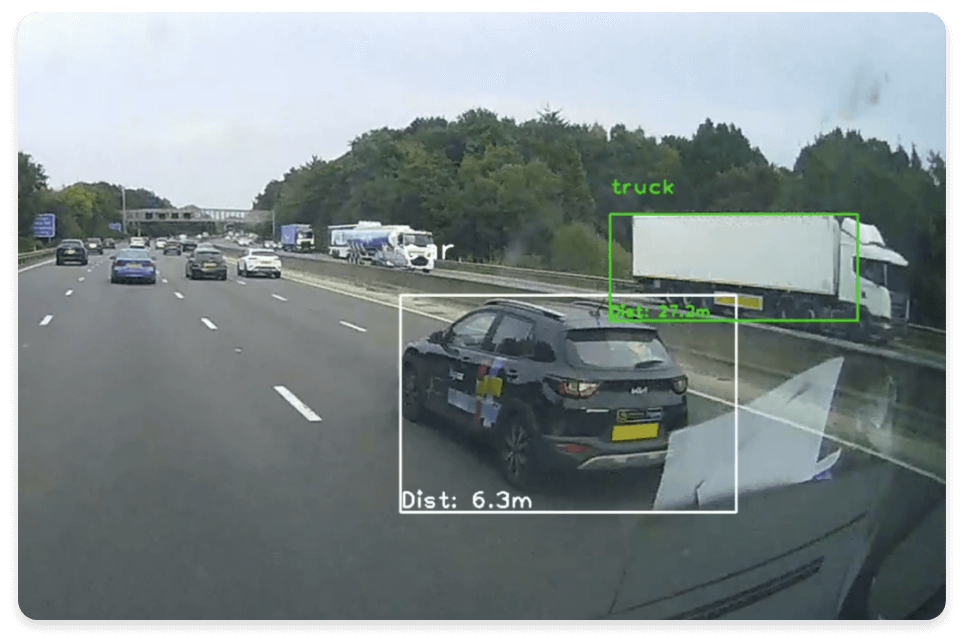

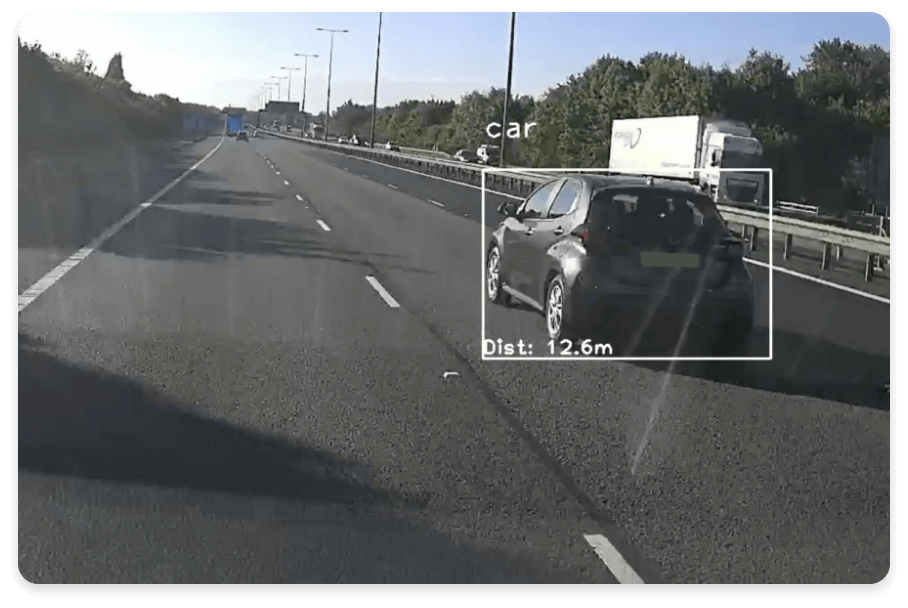

Advanced object recognition software uses deep learning algorithms to train Autonomise.ai to automatically identify vehicles, people, tailgating and crashes.

With accuracy levels already reaching 99%, the platform detects, tracks and alerts on situations involving vehicles, so it is possible to quickly validate driver welfare, take control of the claims management process, and better understand fleet risk.

Using advanced vulnerable road user perception technology

Using advanced vulnerable road user (VRU) perception technology, our edge-based software provides a nuanced understanding of human behaviour, making it possible to accurately predict a person’s actions. Backed by a dataset of 1 billion human behaviours, the software allows vehicle cameras to analyse a person’s direction, speed and distraction, enabling earlier and more accurate driver alerts than traditional ADAS technology.

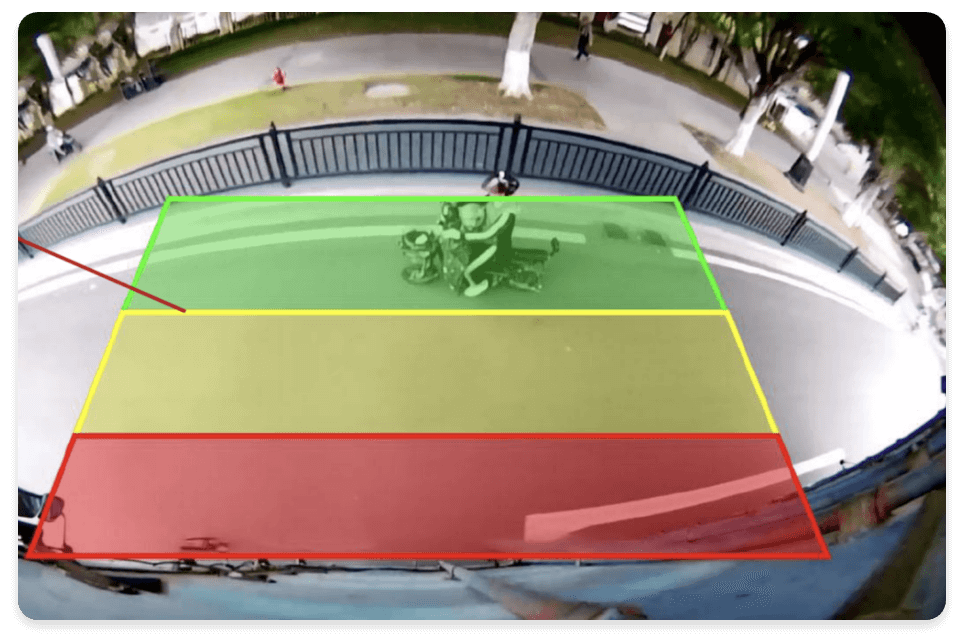

Be alerted when vulnerable people enter virtual exclusion zones around the vehicle

Our latest AI detection camera uses deep learning technology to identify and track vulnerable people and alert the driver when they enter virtual exclusion zones around the vehicle.

Suitable as a forward-, side- or rear-facing solution, the intelligent device can be linked to an in-vehicle monitor to warn of nearby risks and activate an external, audible alarm, while footage is also uploaded to Autonomise.ai.

It can locate people up to 20 metres away in real time, accurately establishing the severity of risk based on their proximity to the vehicle, so drivers have more time to react.
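As a rough illustration of how proximity-based severity could work, the sketch below maps a detected person's distance from the vehicle to a severity band. Only the 20-metre detection range comes from the description above. The zone boundaries (3 m and 8 m), the band names and the function name `severity_for_distance` are assumptions made for illustration, not the camera's actual configuration.

```python
from typing import Optional

# Maximum detection range stated in the text above.
DETECTION_RANGE_M = 20.0

# Hypothetical exclusion zones: (outer boundary in metres, severity label).
# These boundaries and labels are illustrative assumptions only.
ZONES = [
    (3.0, "critical"),
    (8.0, "warning"),
    (DETECTION_RANGE_M, "caution"),
]


def severity_for_distance(distance_m: float) -> Optional[str]:
    """Return the severity band for a person at the given distance.

    Returns None when the person is beyond the detection range.
    """
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    # Zones are ordered nearest first, so the first match is the most severe.
    for outer_limit_m, label in ZONES:
        if distance_m <= outer_limit_m:
            return label
    return None


if __name__ == "__main__":
    for d in (2.0, 5.0, 15.0, 25.0):
        print(f"{d:>5.1f} m -> {severity_for_distance(d)}")
```

In a sketch like this, the nearest zone would drive the external audible alarm, while the outer zones would only raise a warning on the in-vehicle monitor.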

Transforming video telematics with AI

Using increasingly sophisticated AI technologies, VisionTrack is automating management processes, data analysis and incident detection in a wide range of fleet, insurance, road safety and risk management settings, helping vehicle operators take advantage of smart cameras and use video telematics like never before.